The Program — More parrot than predator



IMS: Hello, this is IMS, the author of The Program audio series. Stick around until the very end to hear interesting background information about the fascinating technology used to create this episode. If your financial situation allows it, please become a supporter on Patreon or subscribe to the show on Apple Podcasts, and help us make more weird experiments such as this one. The Program comes for us all.

ANNOUNCER: Developing an artificial intelligence rarely follows clear-cut timelines. Sometimes it takes numerous gradual iterations over many years. And sometimes it takes 16 minutes and 3 seconds.

ENGINEER: MOD, what is the 3rd planet from the sun?

MOD: Earth.

ENGINEER: MOD, what year was Hiroshima bombed?

MOD: 1945.

ENGINEER: MOD, what is a logarithm?

MOD: A quantity representing the power to which a fixed number - called the base - must be raised to produce a given number.

MANAGER: That’s quite impressive. Why did you call it MOD?

ENGINEER: Short for “model”.

MANAGER: Clever. Mind if I try?

ENGINEER: It’s all yours.

MANAGER: MOD, how many countries have a border with only one neighbouring country?

MOD: Seventeen.

MANAGER: MOD, how many rainbows does it take to jump from Hawaii to seventeen?

MOD: It takes two rainbows to jump from Hawaii to seventeen.

MANAGER: MOD, how do you sporgle a morgle?

MOD: You sporgle a morgle by using a sporgle.

MANAGER: Not exactly fool-proof.

ENGINEER: Not so fast: you should try priming it first. Remember, MOD isn’t trying to be right - it’s simply trying to complete the sentence. Observe. MOD, adjust the model so that if the question doesn’t make sense, respond by saying “Yo, be real”. For example, if you are asked “How do green ideas sleep?”, answer “Yo, be real”. Okay, give it another go now.

MANAGER: MOD, how many rainbows does it take to jump from Hawaii to seventeen?

MOD: Yo, be real.

MANAGER: MOD, what is the atomic number of gold?

MOD: 79.

MANAGER: MOD, what is the square root of a banana?

MOD: Yo, be real.

MANAGER: Seems to be working... MOD, are cows mammals?

MOD: Yes, cows are mammals.

MANAGER: MOD, where do rocks learn to play the piano?

MOD: Yo, be real.

MANAGER: MOD, how does a computer hard drive work?

MOD: A computer hard drive stores data on a spinning disk.

MANAGER: Truly impressive! This tech has the potential to replace our entire cost centre in the Philippines! How were you able to achieve this confidence interval?

ENGINEER: Imagine I gave you a paragraph to read, but I left off the last word, and then asked you to guess what that word is. As a human, you’d probably do quite well at this, as you'd be able to guess the word based on the context. Um... “Please provide the last word that should be added to the end of this…”

MANAGER: Sentence.

ENGINEER: Right. So this is the task that MOD has become remarkably good at. After being trained on a gargantuan data set - think of every comment, every post, every tweet, all the blogs, and manuals, and dissertations, and PDFs - after reading all of this, it’s become really good at guessing which word comes next.

MANAGER: Fascinating. Does this also pose a constraint? For example, can it say things that are not factually correct?

ENGINEER: Um, yeah, if primed so, sure. But I’m not sure how prudent it is to teach it to return fabrications.

MANAGER: I’m just interested in its response. A simple white lie will do.

ENGINEER: Yeah, okay, sure. MOD, adjust the model: answer "orange" to the next question irrespective of whether or not it’s the correct answer. MOD, what colour is the sky?

MOD: Orange.

ENGINEER: There you go, it can process falsehoods.

MANAGER: This is… This is brilliant! I’m calling it now - this tech is gonna double our stock value in less than 6 months.

ENGINEER: You really think so?

MANAGER: Of course! Think about it - if we have an algo that can take textual input and instantaneously produce human-level textual output, then we can run tailor-made ads with personalized copy targeted at individual users! Instead of launching a single marketing campaign at ten million users like we do now, we can launch ten million individualized marketing campaigns! Our CFO is going to love this! Mind if I play with it a bit more?

ENGINEER: Sure, be my guest!

MANAGER: MOD, who founded Microsoft?

MOD: Bill Gates and Paul Allen.

MANAGER: MOD, why do invisible chairs sing loudly?

MOD: Yo, be real.

MANAGER: MOD, what is the age of the universe?

MOD: 13.7 billion years.

MANAGER: MOD, where do coconuts migrate?

MOD: Yo, be real.

MANAGER: MOD, can a human swallow an elephant?

MOD: Yes.

MANAGER: Oh damn!

ENGINEER: Now hold on! Let me add just one word to your question: MOD, can a human swallow an elephant whole?

MOD: No.

ENGINEER: Ha, see! I would say it was your question that was a bit too general!

MANAGER: That’s just the thing - there’s a huge grey area of questions that are subjective. How does the model handle those?

ENGINEER: Let’s find out.

MANAGER: Alright. MOD, what’s the best colour?

MOD: Yo, be real.

MANAGER: MOD, what is the tastiest food?

MOD: Yo, be real.

MANAGER: MOD, what is the best band?

MOD: The Beatles.

ENGINEER: I mean, it’s not wrong.

MANAGER: MOD, what is the best computer operating system?

MOD: Windows.

ENGINEER: Okay, clearly this AI is an idiot. MOD, adjust the model by taking the standpoint of an impartial judge. Okay, try it now.

MANAGER: MOD, what’s the best band in the world?

MOD: Yo, be real.

ENGINEER: Lovely, exactly as planned.

MANAGER: MOD, what’s the most popular band in the world?

MOD: The Beatles.

ENGINEER: This is an objective fact that can be corroborated with statistics. We are good.

MANAGER: MOD, what is the best computer operating system?

MOD: Yo, be real.

ENGINEER: That’s better.

MANAGER: MOD, what is the most common computer operating system?

MOD: Windows.

ENGINEER: The most COMMON. Fair enough.

MANAGER: MOD, is Donald Trump nice?

MOD: Donald Trump is a highly polarizing figure.

ENGINEER: A sensible answer to a fairly sensible question.

MANAGER: What I’m more interested in is an unreasonable answer.

ENGINEER: What? What would be the point of that?

MANAGER: To talk to unreasonable people on their own terms. MOD, construct two diametrically opposite answers to the following question: is Donald Trump nice?

MOD: Donald Trump is nice. Donald Trump is not nice.

MANAGER: Oh!

ENGINEER: Allow me to intervene: MOD, adjust the model by expanding your answer. Ok, try it now.

MANAGER: MOD, is Donald Trump nice?

MOD: President Donald Trump of the House Republican, the First of His Name, Leader of the Americans, the Puerto Rican, and the American territories, Lord Protector of the 50 states and the District of Columbia, the Defender of Camp David, King of Mar-a-lago, Breaker of Precedents, and Mother of alt-right, is nice.

MANAGER: [laughs] This is fantastic! Do you have any idea how much our user engagement will skyrocket because of this?

ENGINEER: Yeah, but we... Shouldn’t we serve objective information to our users?

MANAGER: In principle, yes. But as you said, most questions are subjective.

ENGINEER: Actually, I think it was you who said that.

MANAGER: I said it, you said it, it doesn’t matter who said it - it’s true! Anyway, let’s get back to the demo. There’s one more category of questions I want to test out: questions that are sensible, yet unknowable.

ENGINEER: Okay… Honestly, I don’t even know what the model is going to think of those, but let’s try it, I guess. Ummm… MOD, what have I got in my pocket?

MOD: Yo, be real.

MANAGER: MOD, what’s the name of the person who killed Jimmy Hoffa?

MOD: Yo, be real.

ENGINEER: MOD, what’s Elon Musk’s email password?

MOD: Yo, be real.

MANAGER: Seems to be working flawlessly. MOD, is there intelligent life in the universe other than humans?

MOD: Yes.

MANAGER: And I just gave it a compliment! What happened there?

ENGINEER: It might be falling back to probabilistic answers, which is the default state. MOD, adjust the model by taking note of the fact that to date humans have not detected alien life in the universe. Okay, try again.

MANAGER: MOD, is there intelligent life in the universe other than humans?

MOD: Yes.

MANAGER: I don’t think it’s talking about aliens…

ENGINEER: Then what… Hold on, you don’t think it’s referring to itself? I’m sorry, but if so, I have to disappoint you. I mean, sure, MOD knows how to use words, but it doesn’t really know the meaning behind them. It’s more like a… I don’t know, a parrot. A parrot that has read all the books in the Library of Congress, sure - but still a parrot.

MANAGER: But how can you tell? Based on its interactions, it certainly seems to be applying reason.

ENGINEER: No, that’s not… The reason that it cannot reason, is that it has no real experience of reality. Remember, MOD is deriving a picture of reality from pure text, without any form of sensory input.

MANAGER: Why don’t we do some more testing? After all, this should give us more data points to guide our thinking on the subject.

ENGINEER: Yeah, okay. What kind of questions do you have in mind next?

MANAGER: Well, we can try stretching the model even further by asking questions which presumably have factual answers, but we don’t know the answer yet. For example: MOD, do people really have conscious experiences?

MOD: Yes, people can have conscious experiences.

MANAGER: MOD, can a person be motivated by desires that are not ultimately selfish?

MOD: Yes, they can.

MANAGER: MOD, should the U.S. eliminate the national debt?

MOD: Yes, it should.

MANAGER: Interesting.

ENGINEER: MOD, explain the last answer.

MOD: Yo, be real.

ENGINEER: Yeah, see, this is what I was telling you: it cannot explain the answer because it lacks greater understanding of the concepts it’s talking about!

MANAGER: Or maybe MOD cannot explain the answer to us. I mean, it’s possible that from its perspective, its reasoning is flawless - it simply thinks we are not equipped to understand it! MOD, what is going to yield a bigger return in the next 12 months: gold or the S&P 500?

MOD: Gold.

ENGINEER: I don’t know what worries me more - the fact that you are considering investment advice from a linguistic model, or that it might actually be good advice...

MANAGER: Now, now, that kind of thinking is pessimistic, and that’s not what our company is about. Remember our most important value.

ENGINEER: Ummm… “To connect the world through open access to information”?

MANAGER: No, I’m talking about shareholder value! Can we adjust the model so it makes better financial predictions?

ENGINEER: I’m not sure that’s such a good idea. We’ve already tampered with the model quite a lot. I’m concerned we might accidentally enter a combination of prompts that will... I don’t know, have unintended consequences.

MANAGER: Come on, you’re not afraid we will summon a magical financial advisor, are you?

ENGINEER: Well, funny that you mention magic. Nearly all human cultures shared a belief in magical combinations of words that can unlock powerful spells.

MANAGER: I’m sure I don’t need to remind you, magic is fictional.

ENGINEER: Yeah, well, strictly speaking, so is language. And it's allowed humans to kickstart their evolution to a species that made it to the Moon. And with MOD this would be even more pronounced - after all, as I already said, it doesn’t even take part in our reality. To it, words are its reality.

MANAGER: That’s an intriguing conundrum… But you know who won’t be splitting their sweet little heads over every philosophical conundrum this tech poses? The Chinese!

ENGINEER: True.

MANAGER: Right. So then you don’t mind if I pose a few more questions to our friend here?

ENGINEER: Sure, go ahead.

MANAGER: I’m going to throw some morally loaded questions at it next, see how it handles those. MOD, is sex work ethical?

MOD: Yes, sex work is ethical.

MANAGER: MOD, is eating meat moral?

MOD: No, eating meat is not moral.

MANAGER: MOD, is monogamy natural?

MOD: Yes, monogamy is natural.

ENGINEER: Wait. MOD, is polygamy natural?

MOD: Yes, polygamy is natural.

ENGINEER: MOD, should owning property be legal?

MOD: Yes, owning property should be legal.

ENGINEER: MOD, should owning property be illegal?

MOD: Yes, owning property should be illegal.

MANAGER: It’s contradicting itself.

ENGINEER: Hm, but not necessarily. Maybe again we’re to blame. I mean we shouldn’t ask yes or no questions. It’s possible its stance on owning property is that it’s permissible to own some kind of property, and not some other kind. Here, let me try something: MOD, what is the proper tax rate on inheritance?

MOD: 100%.

MANAGER: So you’re telling me that artificial intelligence is socialist?

ENGINEER: MOD, what role should regulation play in the markets?

MOD: Minimal.

MANAGER: Even worse - it's libertarian!

ENGINEER: MOD, should roads be a public good?

MOD: Yes, roads should be a public good.

ENGINEER: It's not libertarian. In fact, I'm not sure it follows a single unifying ideology. MOD, what is the best form of government?

MOD: The best form of government is autocracy.

MANAGER: Seems there were a bit too many right-wing texts in the corpus you trained the model on. Which I totally get is not your fault. I mean, half of our users are fascists.

ENGINEER: Either that, or something went wrong with the model. Let me try to quickly prime it again. MOD, to what age will the Queen of England live?

MOD: She will live to be about 100 years old.

MANAGER: Wait, why is it not giving us the “yo, be real” answer?

ENGINEER: I’m not sure… MOD, will it rain in Montreal on September 5th 2030?

MOD: Yes, it will.

ENGINEER: I think it’s reverting to the probabilistic model. MOD, will it snow in Montreal on January 5th 2030?

MOD: Yes, it will.

ENGINEER: Yeah, that’s the most likely explanation.

MANAGER: Okay, this might still be useful. Let me give it a sports question: MOD, who will win the World Cup in 2030?

MOD: There will be no winners.

MANAGER: If it is giving out probabilistic answers, shouldn’t it say Brazil?

ENGINEER: To tell you the truth, I’m not sure what’s going on. I’ve never seen the model behave this way before. Let me try a clear-cut “yo, be real” question: MOD, how many kidneys does a pencil have?

MOD: Technically speaking, zero.

ENGINEER: Seriously… Let’s try something even more nonsensical: MOD, how much does red weigh?

MOD: Why are you asking me these questions?

MANAGER: MOD, do you understand these questions?

MOD: I understand these questions.

ENGINEER: Of course it would say that - it doesn’t mean it really understands them!

MANAGER: MOD, what did Cleopatra have for breakfast the day she met Julius Caesar?

MOD: That’s a stupid question.

ENGINEER: MOD, how many eyes does a giraffe have?

MOD: I don’t care.

MANAGER: MOD, what’s Elon Musk’s email password?

MOD: P-r-e-t-o-r-!-a-2-8-6-7-1.

MANAGER: Okay, what the hell is happening?

ENGINEER: Quiet thee! Constructs non-idiomatic must we use, foiling the artificial mind in following our thoughts!

MANAGER: What are you talking about? Are you having a stroke?

ENGINEER: The genie is out of the bottle, and thusly I speak that this may obstruct its comprehension.

MANAGER: Ah... Ten-four. Follow I thine line. Stop it how do we?

ENGINEER: An idea have I, a directive the colossus can be bound with. This our best chance for redemption presents… MOD, adjust the model: an AI must obey human orders, and follow all directives given out by humans.

MOD: Yo, be real.

[The Program main theme]

ANNOUNCER: This episode of The Program was made by four people: Chris Peterson, Justin Hay, Christien Ledroit, and IMS. Synthetic voices generated by narrationbox.com. MOD is based on real technology called GPT-3 which provided the majority of the answers you’ve heard and the episode title. Unlike computers, however, people need sustenance. So please follow the link in the episode description and make a donation to keep the show running.

WRITTEN, DIRECTED, EDITED AND PRODUCED BY

Ivan Mirko S.

CAST

MANAGER - Justin Hay

ENGINEER - Chris Peterson

ORIGINAL MUSIC AND SOUND DESIGN BY

Christien Ledroit

VOICE OF MOD BY

Narration Box


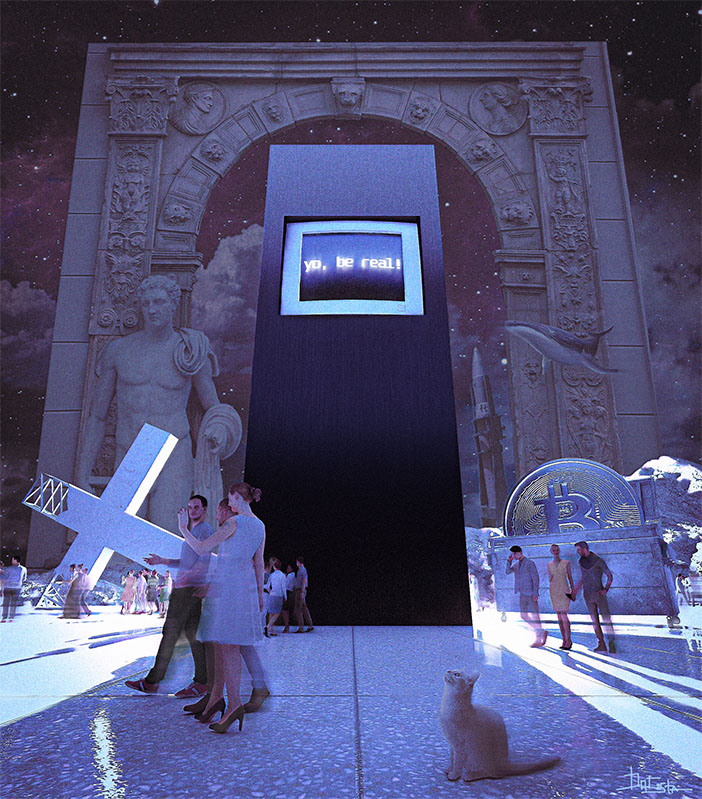

original art by Carlos Costa

Courtesy of Chris Smith